Outcome over output

I discovered the concept of outcome over output in 2014 through Jenny Mar’s work on OOPSI. This led me to Teresa Torres’ awesome work on outcomes and opportunity

This blog post is tagged #qualityhack. A quality hack reminds us not to get overly precious about quality. I know, sounds like heretical talk, right? But hear me out.

If you work in the quality space, as either a quality coach or a quality engineer, it’s easy to think that quality and software testing are the be-all and end-all of software development. It’s totally understandable why, of course. If we want to excel at something, we need to focus on that skill in a deep way. But this immersion in one specific area comes at a cost. It means we sometimes overemphasise the importance of quality when it comes to solving our consumers’ problems and delivering software.

TripleO (outcome over output) reminds me to think about desirable quality-related outcomes rather than specific quality-related outputs.

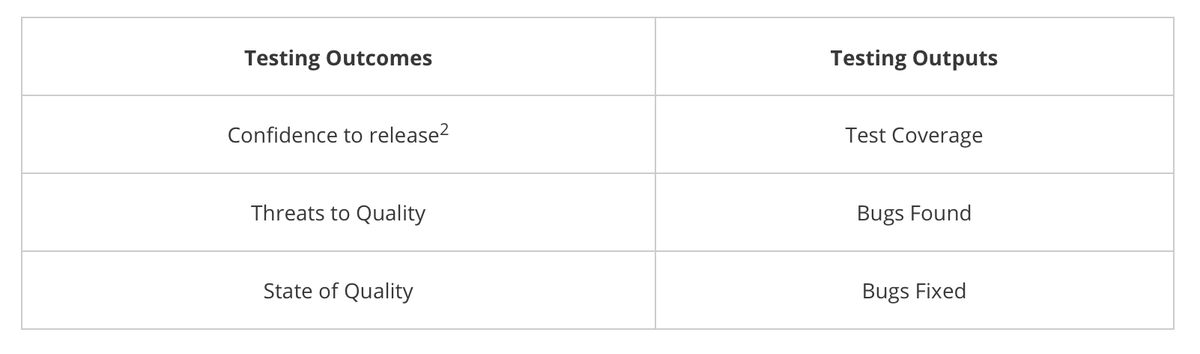

Below, on the right, are typical testing outputs¹. On the left are testing-related outcomes. Think outcome over output. Instead of having conversations about test coverage and bugs found, try focusing on outcomes.

| Testing Outcomes | Testing Outputs |
| --- | --- |
| Confidence to release² | Test Coverage |
| Threats to Quality | Bugs Found |
| State of Quality | Bugs Fixed |

Why? Because the people who pay for testing do so to get these outcomes. Understanding that intent means you can talk to people with the benefit in mind. This is useful when you need to advocate for quality.

Focusing on outcomes gives us a second benefit. When we distance ourselves from outputs and focus on outcomes, magic begins to happen. The mental space this provides opens up alternative solutions beyond testing outputs alone.

Outcome: Confidence to release

Let’s say the outcome from testing is ‘confidence to release’. We know that performing software testing is going to either give or take away confidence in the state of quality of our product, so what sort of questions might we explore here? Try these:

- Why is confidence to release so important? Confidence to release so that…what?

- What’s an example of confidence to release?

- Imagine you could no longer perform software testing prior to a release. How else might you gain confidence?

- What safety nets do we have in place so we don’t have to be 100% confident in releasing?

- How do we know our confidence to release is improving?

- Is it worth trading confidence to release for speed of deployment? What is our limit? How far are we prepared to trade off on this?

Outcome: Threats to Quality

I’m talking risk here. Here are some avenues you may wish to explore around risk:

- What new product risks exist outside of my expertise?

- What business risks exist that I’m not aware of?

- What is the impact of these risks?

- Are they greater than the product risk?

- What new threats to quality am I not considering?

- What risks am I fixated on, and how can I consider other risks?

Outcome: State of Quality

What about the state of quality? Instead of having a discussion about bugs found, what if we had a conversation about the state of quality?

- What is quality? What does it mean for us? This release?

- What state of quality are we aspiring to? What is good enough?

- How will we know we have this state of quality?

- Imagine you could no longer perform software testing prior to a release. How else might we see the state of quality?

- What’s our minimum state of quality?

- What absolutely must not fail?

When we stop being fixated on one solution, when we stop trying to achieve perfection in our software testing, we allow alternative approaches to open up to us.

Next #qualityhack, focusing on quality outcomes.

¹ The intangibles are required to make these outputs good. For instance, testing skill is required and has a significant impact on outputs. These are not the focus of this blog post.

² I’m using the term release loosely here. You could interchange it with deliver/deploy too.
