How do you do your testing?

Here's my advice. Stop thinking about testing in phases and start thinking of testing as an activity: something done in parallel with your coding instead of after it.

One trap I fall into is assuming folks have the same testing knowledge and skill level as I do, which is crazy and totally unfair. I've spent a fairly long professional career working in software testing and pioneering ideas in it. Why on earth would I assume that people with less experience, or who work outside this field, have the same depth of experience and understanding of software testing that I have?

This is why, in the nicest possible way, I often advise quality coaches who are starting with a new team to assume the team knows next to nothing about software testing. Begin with the basics and then work upwards in knowledge and skill. You'll soon discover whether that premise is wrong and can adjust accordingly.

Here's an example.

As an experienced software tester, I push to include my testing as early and as often as possible. I know the benefits this brings to the quality of the overall product. I offer to take releases early, even unfinished ones! Finding bugs early increases release confidence and minimises surprises late in the cycle. It's the no-brainer move any experienced software tester will try to make.

Experience tells me many software engineers think differently about when and how to do software testing. Often, they think of any testing outside of unit testing as a phase to be performed once a number of stories are done.

And why wouldn't they? This is the process they've experienced when working with software testers. Why would it be any different now that they are responsible for the software testing?

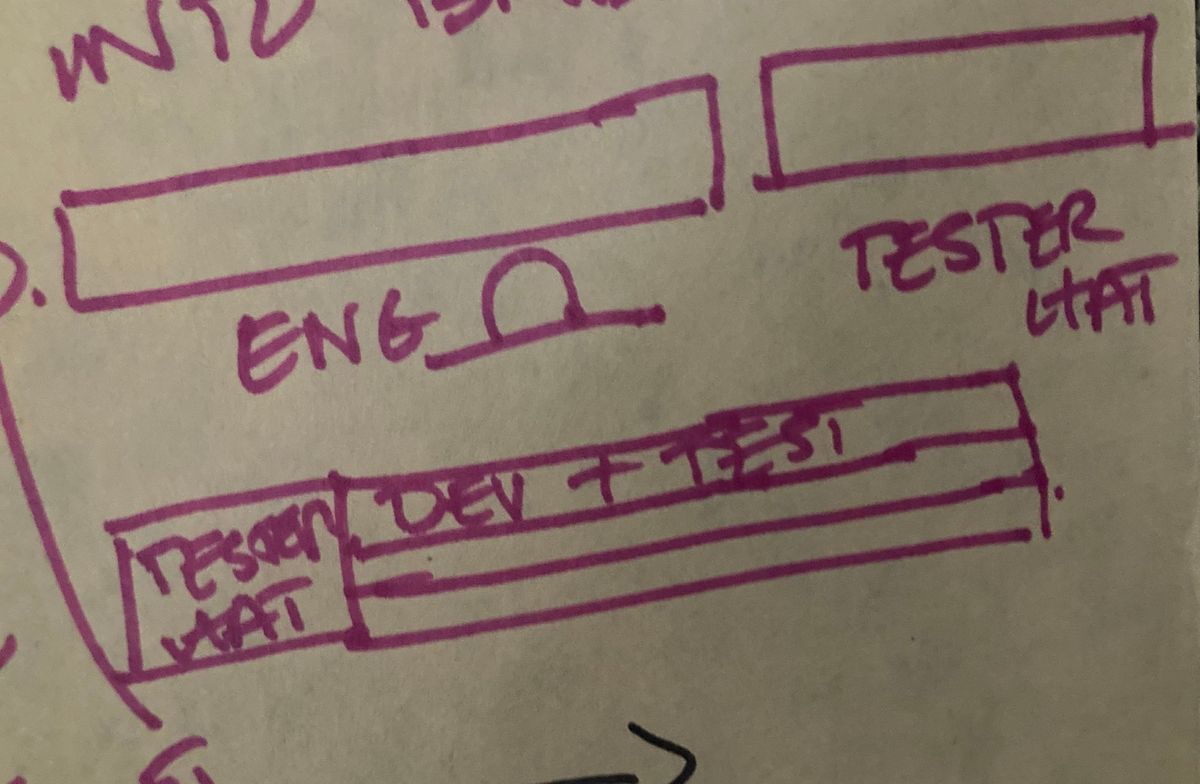

Unsurprisingly, software engineers who own the whole feature lifecycle also think in software testing phases. When they pick up a story, they put on their developer hat and write both the feature code and the unit tests. Once a number of stories are complete, they assume the mantle of a software tester and only then begin to think about how the testing will be performed.

But it doesn't have to be this way. In fact, I'd urge them to think otherwise. By treating testing and development as sequential steps, they miss out on the efficiencies that owning both 'phases' brings.

Owning feature delivery means you get to determine how and when you test. Yeah, sure, you can test in phases, but what if there was a better way?

What if, before software development, they planned out how and what they would test? How might that change how they develop their software?

What if, during development, instead of having a story definition of done that ended at unit testing, they expanded it to include testing in production for a whole range of quality attributes? How might that change how they develop software?

What if, prior to software development, they outlined the SLOs and critical user journeys, identified the OpenTelemetry instrumentation they would need, and used it to provide observability while they developed instead of only in production? How might that change how they develop their software?

I could go on, and I bet you could add a range of additional possibilities.

Here's my advice. Stop thinking about testing in phases and begin thinking of software testing as an activity: something performed in parallel with your coding instead of after it.

Think of it like breathing. You don't breathe in 10 times and then out 10 times. You breathe in, and then you breathe out, repeating until you don't.

Software engineering is no different.
